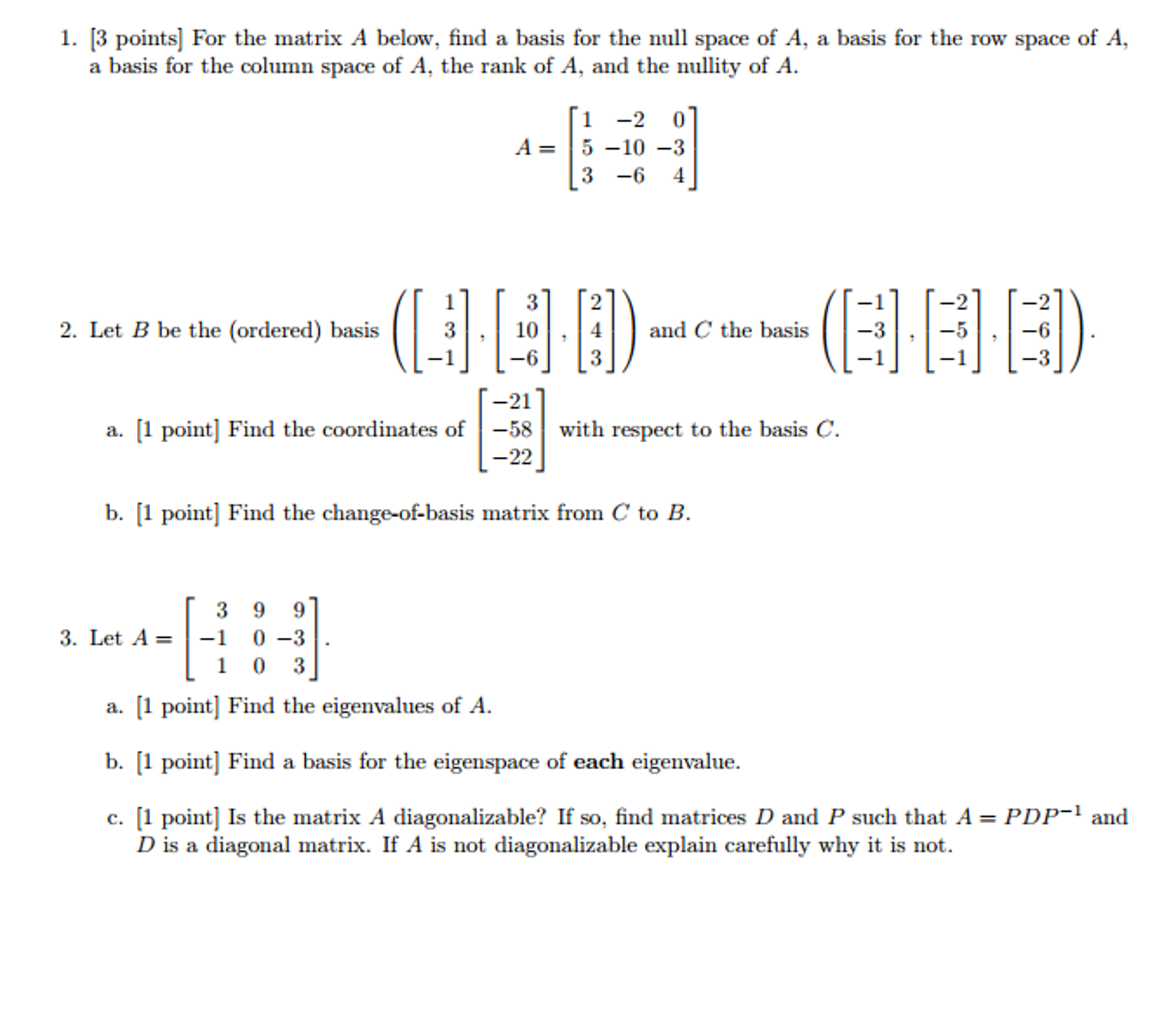

In linear algebra, the rank of a matrix A is the dimension of the vector space generated (or spanned) by its columns. This corresponds to the maximal number of linearly independent columns of A. This, in turn, is identical to the dimension of the vector space spanned by its rows.

Any set containing the zero vector is linearly dependent. Two vectors are linearly dependent if and only if they are collinear, i.e., one is a scalar multiple of the other. If a subset of a set of vectors is linearly dependent, then the whole set is linearly dependent as well.

The null space of A is the set of all vectors x such that A times x equals the zero vector. In general these vectors live in R^n, where n is the number of columns of A. For a 3 × 4 matrix A, for example, the null space consists of vectors in R^4.

Suppose that A has more columns than rows. Then A cannot have a pivot in every column (it has at most one pivot per row), so its columns are automatically linearly dependent. In other words, a wide matrix (a matrix with more columns than rows) has linearly dependent columns. For example, four vectors in R^3 are automatically linearly dependent. Note that a tall matrix may or may not have linearly independent columns.

A few related facts: if we multiply a column vector by a row vector, we get a matrix. A diagonal matrix is a square, symmetric matrix that has zeros everywhere except possibly on the main diagonal. If A and B are square matrices of the same size, their commutator is AB − BA.
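The statements about rank and about wide and tall matrices can be checked on small examples. The following is a minimal sketch, assuming a matrix is stored as a list of rows; the helper names `rank`, `transpose`, and `columns_independent` and the example matrices are made up for illustration. It counts pivots with Gaussian elimination over exact fractions, so there is no rounding error.

```python
from fractions import Fraction


def rank(rows):
    """Count the pivots of a matrix (given as a list of rows) by row reduction."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows = len(m)
    n_cols = len(m[0]) if m else 0
    r = 0  # number of pivots found so far
    for c in range(n_cols):
        # Look for a nonzero entry in column c, at or below row r.
        pivot = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        m[r], m[pivot] = m[pivot], m[r]
        # Clear the entries below the pivot.
        for i in range(r + 1, n_rows):
            factor = m[i][c] / m[r][c]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
        if r == n_rows:
            break  # at most one pivot per row
    return r


def transpose(rows):
    """Swap rows and columns."""
    return [list(col) for col in zip(*rows)]


def columns_independent(rows):
    """Columns are independent exactly when every column has a pivot."""
    return rank(rows) == len(rows[0])


# Hypothetical example matrices.
wide = [[1, 0, 2, 1],
        [0, 1, 1, 3],
        [1, 1, 0, 2]]            # 3 x 4: four vectors in R^3
tall_independent = [[1, 0], [0, 1], [1, 1]]
tall_dependent = [[1, 2], [2, 4], [3, 6]]

print(rank(wide), rank(transpose(wide)))        # column rank and row rank agree
print(columns_independent(wide))                # a wide matrix never passes
print(columns_independent(tall_independent))
print(columns_independent(tall_dependent))
```

The wide example has only three rows, so it can have at most three pivots and its four columns cannot all be pivot columns. The two tall examples show that a tall matrix can go either way.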

The null space of A is the set of all solutions x to the matrix-vector equation Ax = 0. To solve a system of equations Ax = b, use Gaussian elimination.

Solving the matrix equation Ax = 0 will either verify that the columns v1, v2, ..., vk are linearly independent, or will produce a linear dependence relation by substituting any nonzero values for the free variables. The columns are linearly independent if and only if A has a pivot position in every column. (Recall that Ax = 0 has a nontrivial solution if and only if A has a column without a pivot: see Section 2.4.)

This section consists of a single important theorem containing many equivalent conditions for a matrix to be invertible. This is one of the most important theorems in this textbook. We will append two more criteria in Section 5.1. Let A be an n × n matrix, and let T: R^n → R^n be the matrix transformation T(x) = Ax.
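The procedure of solving Ax = 0 and substituting nonzero values for the free variables can be sketched in code. This is an illustrative sketch only, assuming a matrix is stored as a list of rows; the helper names `rref`, `dependence_relation`, and `matvec` and the example matrix are hypothetical. It reduces A to reduced row echelon form with exact fractions, sets every free variable to 1, and solves for the pivot variables.

```python
from fractions import Fraction


def rref(rows):
    """Return the reduced row echelon form and the list of pivot columns."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows, n_cols = len(m), len(m[0])
    pivots = []
    r = 0
    for c in range(n_cols):
        if r == n_rows:
            break
        pivot = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if pivot is None:
            continue  # column c has no pivot, so x_c is a free variable
        m[r], m[pivot] = m[pivot], m[r]
        lead = m[r][c]
        m[r] = [x / lead for x in m[r]]  # scale the pivot to 1
        for i in range(n_rows):
            if i != r and m[i][c] != 0:
                factor = m[i][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
    return m, pivots


def dependence_relation(rows):
    """Return a nonzero x with Ax = 0, or None if the columns are independent."""
    m, pivots = rref(rows)
    n_cols = len(rows[0])
    free = [c for c in range(n_cols) if c not in pivots]
    if not free:
        return None  # a pivot in every column: only the trivial solution
    x = [Fraction(0)] * n_cols
    for c in free:
        x[c] = Fraction(1)  # any nonzero choice works; 1 is an arbitrary pick
    # Each pivot row reads: x_pivot + sum(entry * x_free) = 0.
    for r, p in enumerate(pivots):
        x[p] = -sum(m[r][c] * x[c] for c in free)
    return x


def matvec(rows, x):
    """Compute the product Ax."""
    return [sum(Fraction(a) * b for a, b in zip(row, x)) for row in rows]


A = [[1, 2, 3],
     [4, 5, 6],
     [7, 8, 9]]  # hypothetical example whose third column has no pivot

x = dependence_relation(A)
print(x)             # coefficients of a linear dependence among the columns
print(matvec(A, x))  # every entry is zero
print(dependence_relation([[1, 0], [0, 1]]))  # None: columns are independent
```

For this example the relation found is v1 − 2·v2 + v3 = 0. Choosing a different nonzero value for the free variable would give a scalar multiple of the same relation.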
